Configure an LLM integration

To calculate metrics using an out-of-the-box metric or a custom LLM-as-a-judge metric, you need to either configure an integration with an LLM or set up the Luna-2 SLM. To configure an LLM, visit the relevant API platform to obtain an API key, then add it using the integrations page in the Galileo Console. If you are using a custom code-based metric, you don’t need an LLM integration.

Experiment flow

The entry point for running experiments is a call to the run experiment function (see the run_experiment Python SDK docs or the runExperiment TypeScript SDK docs for more details).

Experiments take a dataset and pass it either to a prompt template or to a custom function. This custom function can range from a simple call to an LLM to a full agentic workflow. Experiments also take a list of one or more metrics used to evaluate the traces. Each metric can be an out-of-the-box metric, specified using the constants provided by the Galileo SDK, or the name of a custom metric.

For each row in a dataset, a new trace is created, and either the prompt template is logged as an LLM span, or every span created in the custom function is logged to that trace.

When you call your application code from an experiment, the experiment runner will start a new session and trace for every row in your dataset. You will need to ensure your application code doesn’t start a new session or trace manually, or conclude or flush the trace. If you are using the log wrapper or a third-party integration, this is handled for you. If you are logging manually, you will need to check whether an experiment is in progress. See the Experiment SDK docs for details on how to do this.

Run experiments with prompt template

A simple way to get started with experimentation is by evaluating prompts against datasets. This is especially valuable during the initial prompt development and refinement phase, where you want to test different prompt variations. Assuming you’ve previously created a dataset, you can run an experiment by passing that dataset and a prompt template to run_experiment.

Run experiments with generated output

Available in Galileo Python SDK v1.50.1 and later. Bring your own data from any system (production logs, external LLMs, or manual curation) and evaluate it directly. Typical uses:

- Evaluating production output: Export traces from your live system, run quality metrics to find issues

- Comparing model providers: Collect output from OpenAI, Anthropic, and Gemini offline, then score them all in Galileo

- Regression testing: After improving your RAG pipeline, run the same metrics on new output to see if scores improved

- A/B testing: Run the same inputs through two different systems, put both outputs in datasets, compare metric scores

How it works

- Create a dataset with an input column and a generated_output column.

- Call run_experiment without a prompt_template. Galileo detects the generated_output column automatically.

- Metrics are computed directly on the existing output, so no LLM calls are needed for generation.

Example

This flow is currently supported in the Python SDK (v1.50.1+). TypeScript support uses the same API: omit promptTemplate to use this flow.

Using this flow

You can use this flow in two ways:

- Already have a Galileo dataset? Pass it directly to run_experiment(...).

- Have local Python data (e.g., a list of dictionaries)? First upload it with create_dataset(...), then pass the returned dataset to run_experiment(...).

Dataset columns

Your dataset needs at minimum an input column and a generated_output column.

| Column | Required | Description |
|---|---|---|
| input | Yes | The user query or prompt input |
| generated_output | Yes | The output from your AI system. Must have data in at least one of the first 100 rows. |
| ground_truth | No | Expected answer, used by the Ground Truth Adherence metric. The SDK also accepts output as an alias for backward compatibility. |
| metadata | No | Additional context for filtering or grouping |
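
As a rough illustration of the column requirements above, the following sketch checks local rows (a list of dictionaries) before they are uploaded. The helper name check_rows and the choice to rename output to ground_truth locally are assumptions made for this example. They are not part of the Galileo SDK, which performs its own validation.

```python
from typing import Any

REQUIRED_COLUMNS = ("input", "generated_output")


def check_rows(rows: list[dict[str, Any]]) -> list[dict[str, Any]]:
    """Return copies of the rows with `output` renamed to `ground_truth`.

    Raises ValueError if any row lacks a required column.
    Hypothetical helper for illustration; not a Galileo SDK function.
    """
    checked = []
    for index, row in enumerate(rows):
        missing = [name for name in REQUIRED_COLUMNS if name not in row]
        if missing:
            raise ValueError(f"row {index} is missing columns: {missing}")
        row = dict(row)  # copy so the caller's data is not mutated
        # Prefer the recommended column name when only the alias is present.
        if "output" in row and "ground_truth" not in row:
            row["ground_truth"] = row.pop("output")
        checked.append(row)
    return checked


rows = check_rows([
    {
        "input": "What is the capital of France?",
        "generated_output": "Paris is the capital of France.",
        "output": "Paris",  # legacy alias, renamed to ground_truth
    },
])
```

Rows checked this way can then be uploaded with create_dataset(...) and passed to run_experiment(...), as described under "Using this flow".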

Column naming: The SDK accepts both ground_truth and output for the reference/expected answer column. Internally they map to the same field. In the Galileo Console and CSV exports, this column is displayed as “Ground Truth”. We recommend using ground_truth in new datasets for clarity.

Flow determination

When you call run_experiment:

- If you provide a prompt_template, the prompt-driven flow is always used (even if the dataset has a generated_output column).

- If you omit prompt_template and the dataset has a generated_output column with at least one non-empty value in the first 100 rows, the generated output flow is used.

- If you omit prompt_template and the dataset has no generated_output column, or the column exists but has no non-empty values in the sampled rows, an error is returned.
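
The rules above can be sketched as a small decision function. This is only a model of the documented behavior, not the SDK's implementation: the name choose_flow, the returned labels, and the treatment of None and whitespace-only strings as empty are all assumptions made for this example.

```python
from typing import Any, Optional

SAMPLE_SIZE = 100  # the rules above sample the first 100 rows


def _is_non_empty(value: Any) -> bool:
    # Assumption: None and whitespace-only strings count as empty.
    return value is not None and str(value).strip() != ""


def choose_flow(prompt_template: Optional[Any], rows: list[dict[str, Any]]) -> str:
    """Return which flow the documented rules would select (illustrative only)."""
    # Rule 1: a prompt template always wins, whatever the dataset contains.
    if prompt_template is not None:
        return "prompt"
    # Rule 2: without a template, look for generated output in the sampled rows.
    sample = rows[:SAMPLE_SIZE]
    if any(_is_non_empty(row.get("generated_output")) for row in sample):
        return "generated_output"
    # Rule 3: neither a template nor usable generated output.
    raise ValueError(
        "no prompt_template and no non-empty generated_output in the sampled rows"
    )
```

One consequence worth noting: a dataset whose first 100 rows all have an empty generated_output falls under the third rule, even if later rows are populated.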

Run experiments with custom function

Once you’re comfortable with basic prompt testing, you might want to evaluate more complex parts of your app using your datasets. This approach is particularly useful when you have a generation function in your app that takes a set of inputs, which you can model with a dataset. If your experiment runs code that uses the log decorator or a third-party SDK integration, all the spans created by these will be logged to the experiment.

This example uses the log decorator. The workflow span created by the log decorator will be logged to the experiment.

Run experiments against complex code with custom functions

Custom functions can be as complex as required, including multiple steps, agents, RAG, and more. This means you can build experiments around an existing application, allowing you to run experiments against the full application you have built, using datasets to mimic user inputs. For example, if you have a multi-agent LangGraph chatbot application, you can run an experiment against it using a dataset to define different user inputs, and log every stage in the agentic flow as part of that experiment.

To enable this, you will need to make some small changes to your application logic to handle the logging context from the experiment. When functions in your application are run by the run_experiment call, a logger is created by the experiment runner, and a trace is started. This logger can be passed through the application, accessed using the @log decorator or by calling galileo_context.get_logger_instance() in Python, or getLogger in TypeScript.

You will need to change your code to use this instead of creating a new logger and starting a new trace.

Get an existing logger and check for an existing trace

The Galileo SDK maintains a context that tracks the current logger. You can get this logger by calling galileo_context.get_logger_instance() in Python, or getLogger in TypeScript.

Using third-party integrations with experiments

If you are using third-party integrations, there may be some configuration you need to do to make the integrations work with experiments. See the documentation for the integration you are using for more details.

Custom function logging principles

There are a few important principles to understand when logging experiments in code.

- When running an experiment, a new logger is created for you and set in the Galileo context. If you create a new logger manually in the application code used in your experiment, this logger will not be used in the experiment.

- To access the logger to manually add traces inside the experiment code, you can call galileo_context.get_logger_instance() (Python) or getLogger() (TypeScript) to get the current logger.

- To detect if there is an active trace, use the current_parent() (Python) or currentParent (TypeScript) method on the logger. This will return None/undefined if there isn’t an active trace.

- Be sure to handle cases in your application code where a logger is created or a trace is started, and make sure this doesn’t happen in an experiment, so that the experiment logger and trace are used instead.

- Every row in a dataset is a new trace. If you create new traces manually, they will not be used.

- Do not conclude or flush the logger in your experiment; the experiment will do this for you.
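
A minimal sketch of application code that follows these principles is shown below. So that it runs without the SDK, it uses a StubLogger stand-in: only the current_parent() behavior (returning None when no trace is active) comes from the description above, while the start_trace, conclude, and flush signatures and the answer function are assumptions made for this example.

```python
class StubLogger:
    """Stand-in for the real logger; records calls so the flow can be inspected."""

    def __init__(self, trace_active: bool = False):
        self.calls = []
        self._parent = "experiment-trace" if trace_active else None

    def current_parent(self):
        # Returns None when there is no active trace, as described above.
        return self._parent

    def start_trace(self, input: str) -> None:
        self.calls.append("start_trace")
        self._parent = "app-trace"

    def conclude(self, output: str) -> None:
        self.calls.append("conclude")
        self._parent = None

    def flush(self) -> None:
        self.calls.append("flush")


def answer(logger, question: str) -> str:
    """Application entry point that is safe to call from an experiment."""
    # Only manage the trace lifecycle when nothing upstream has started one.
    owns_trace = logger.current_parent() is None
    if owns_trace:
        logger.start_trace(input=question)

    response = f"Echo: {question}"  # placeholder for the real application logic

    if owns_trace:
        # Inside an experiment this branch is skipped: the runner
        # concludes and flushes the trace itself.
        logger.conclude(output=response)
        logger.flush()
    return response


standalone = StubLogger(trace_active=False)
answer(standalone, "hello")

in_experiment = StubLogger(trace_active=True)
answer(in_experiment, "hello")
```

In a real application the logger would come from galileo_context.get_logger_instance() rather than being constructed directly. The point of the owns_trace check is that the same function can run unchanged in production and inside an experiment.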

Set metrics for your experiment

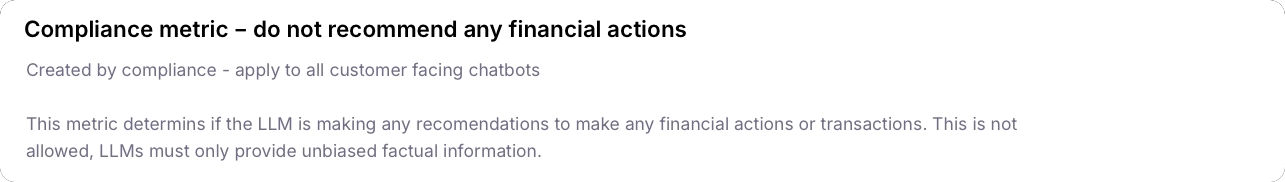

When you run an experiment, you need to define which metrics you want to evaluate for each row in the dataset. For out-of-the-box metrics, use the constants provided by the Galileo SDK. For custom metrics, use the metric name, such as "Compliance - do not recommend any financial actions".

Ground truth

For Ground Truth Adherence, you also need to set the ground truth in your dataset. This is set in the ground_truth column.

If you set the ground_truth column when using other metrics, the value is not used in the calculation of the metric, but can be added to the Galileo Console under the “Dataset Ground Truth” column. This can be helpful for manual review.

Custom dataset evaluation

As your testing needs become more specific, you might need to work with custom or local datasets. This approach is well suited to focused testing of edge cases, or to building up your test suite with specific scenarios.

Custom metrics for deep analysis

For the most sophisticated level of testing, you might need to track specific aspects of your application’s behavior. Custom metrics provide the flexibility to define precisely what you want to measure, enabling deep analysis and targeted improvement.

Experimenting with agentic and RAG applications

The experimentation framework extends naturally to more complex applications like agentic AI systems and RAG (Retrieval-Augmented Generation) applications. When working with agents, you can evaluate various aspects of their behavior, from decision-making capabilities to tool usage patterns. This is particularly valuable when testing how agents handle complex workflows, multi-step reasoning, or tool selection.

For RAG applications, experimentation helps validate both the retrieval and generation components of your system. You can assess the quality of retrieved context, measure response relevance, and ensure that your RAG pipeline maintains high accuracy across different types of queries. This is especially important when fine-tuning retrieval parameters or testing different reranking strategies.

The same experimentation patterns shown above apply to these more complex systems. You can use predefined datasets to benchmark performance, create custom datasets for specific edge cases, and define specialized metrics that capture the unique aspects of agent behavior or RAG performance. This systematic approach to testing helps ensure that your advanced AI applications maintain high quality and reliability in production environments.

Best practices

- Use consistent datasets: Use the same dataset when comparing different prompts or models to ensure fair comparisons.

- Test multiple variations: Run experiments with different prompt variations to find the best approach.

- Use appropriate metrics: Choose metrics that are relevant to your specific use case.

- Start small: Begin with a small dataset to quickly iterate and refine your approach before scaling up.

- Document your experiments: Keep track of what you’re testing and why to make it easier to interpret results.

Next steps

Experiments SDK

Use Datasets in Code

Learn more about datasets, the data driving your experiments.

Prompt Templates

Learn how to create and use prompt templates in experiments.

Metrics

Metrics Reference Guide

A list of supported metrics and how to use them in experiments.

Local Metrics

Create and run custom metrics directly in code.

Custom Code-Based Metrics

Create reusable custom metrics right in the Galileo Console.

Custom LLM-as-a-Judge Metrics

Create reusable custom metrics using LLMs to evaluate your response quality.