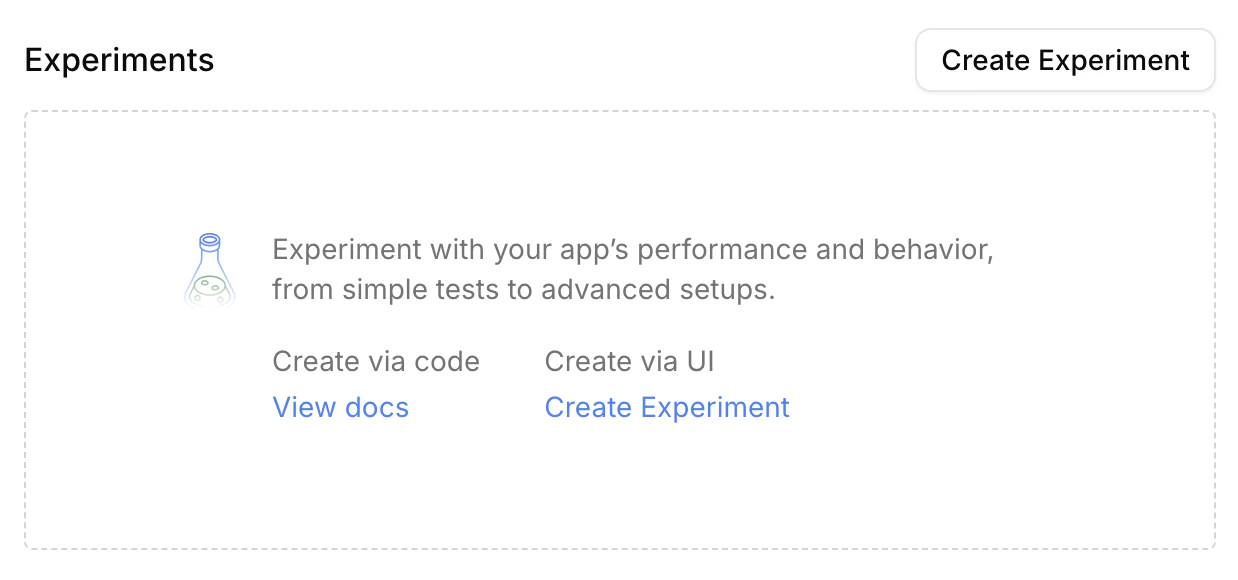

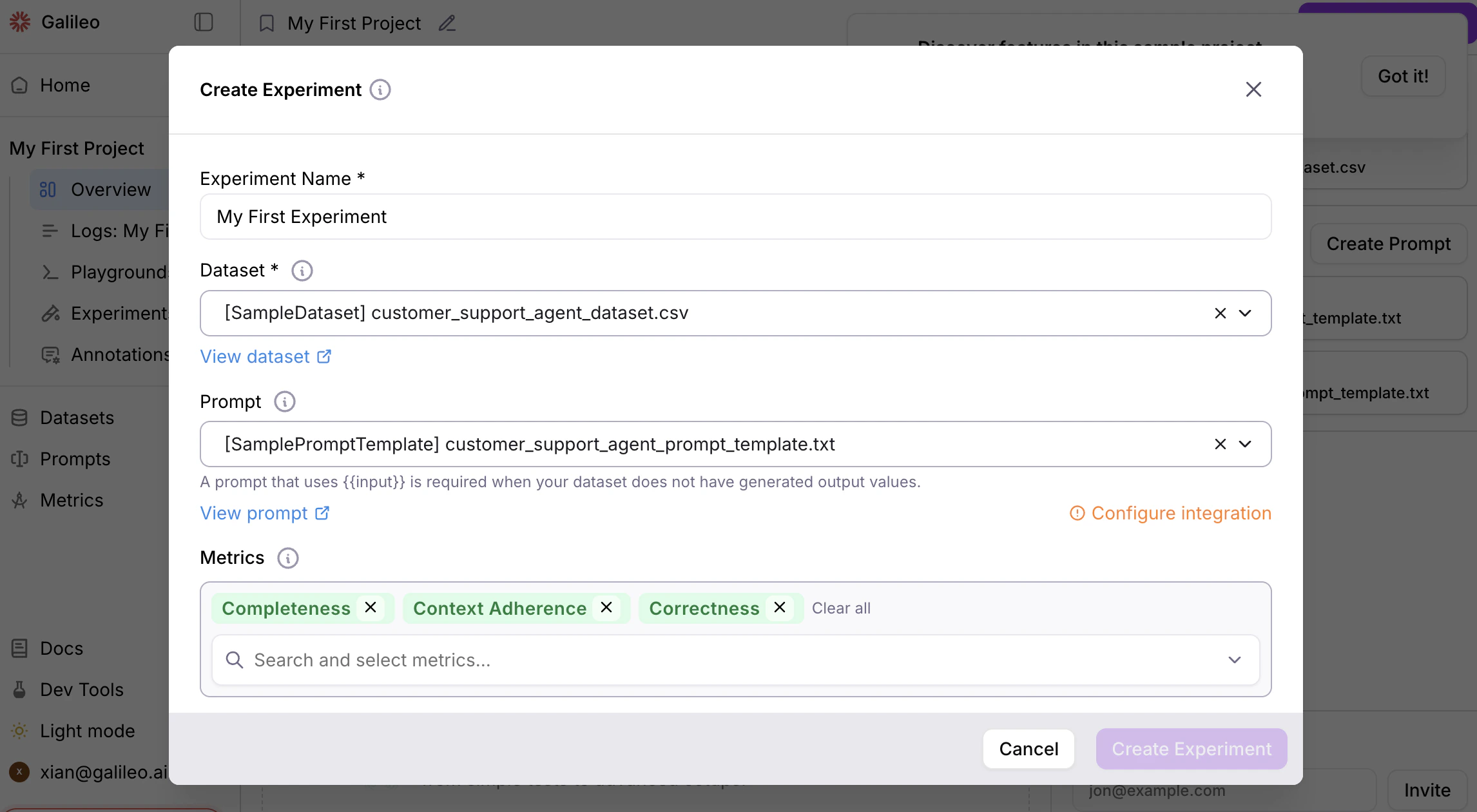

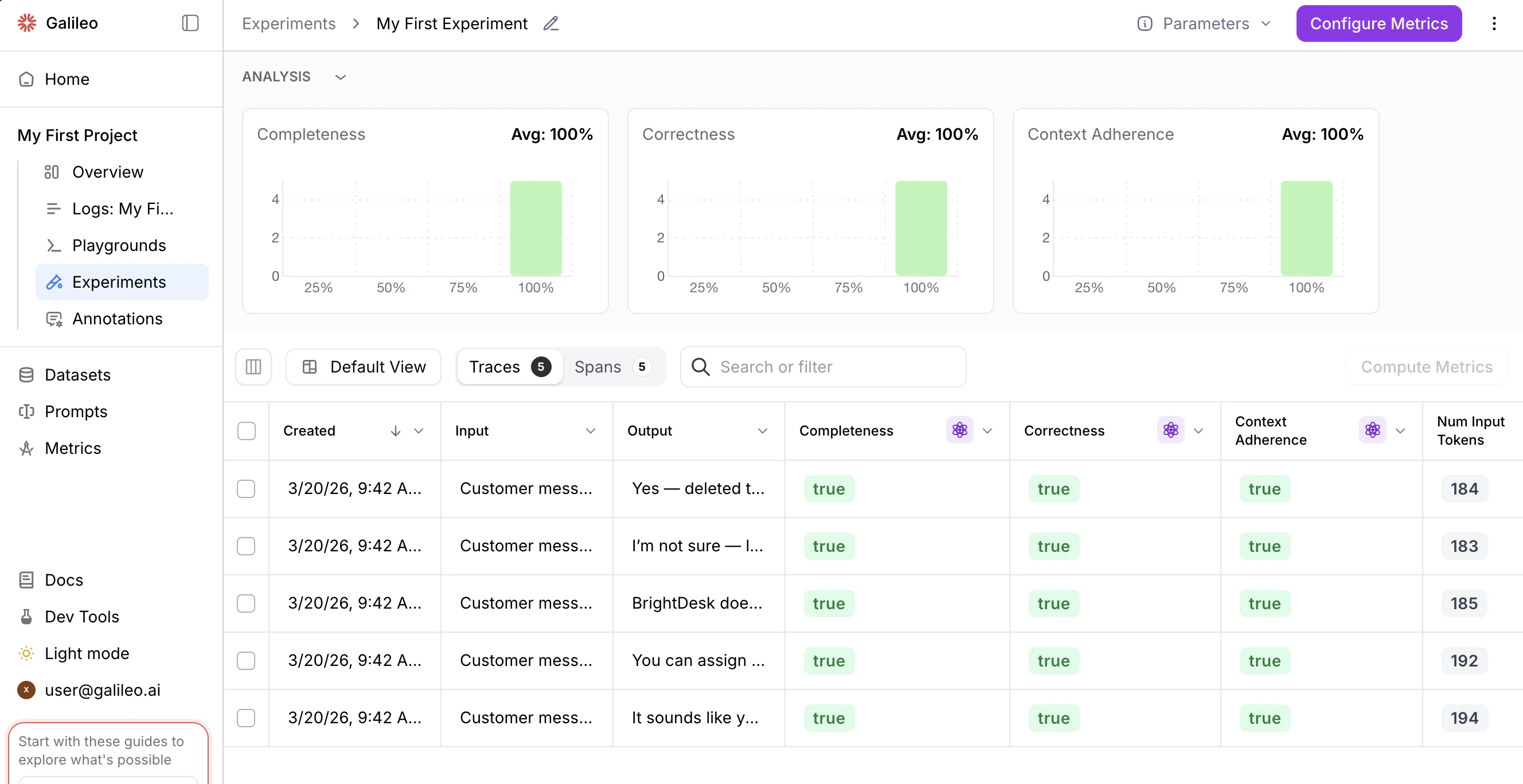

Run an experiment with UI

In the Galileo console UI, “Create Experiment” buttons allow you to easily add experiments to a project.

Run an experiment with code

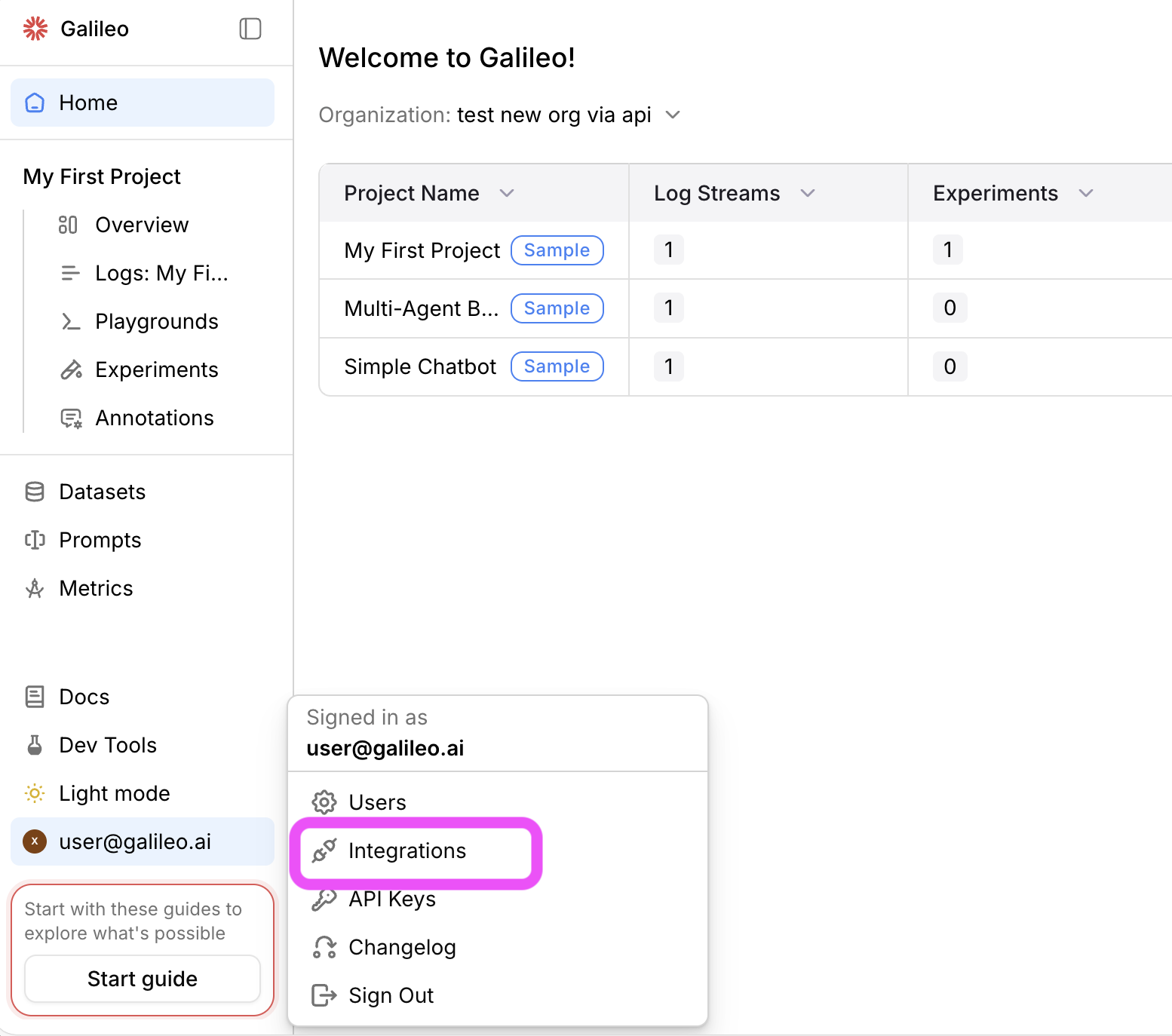

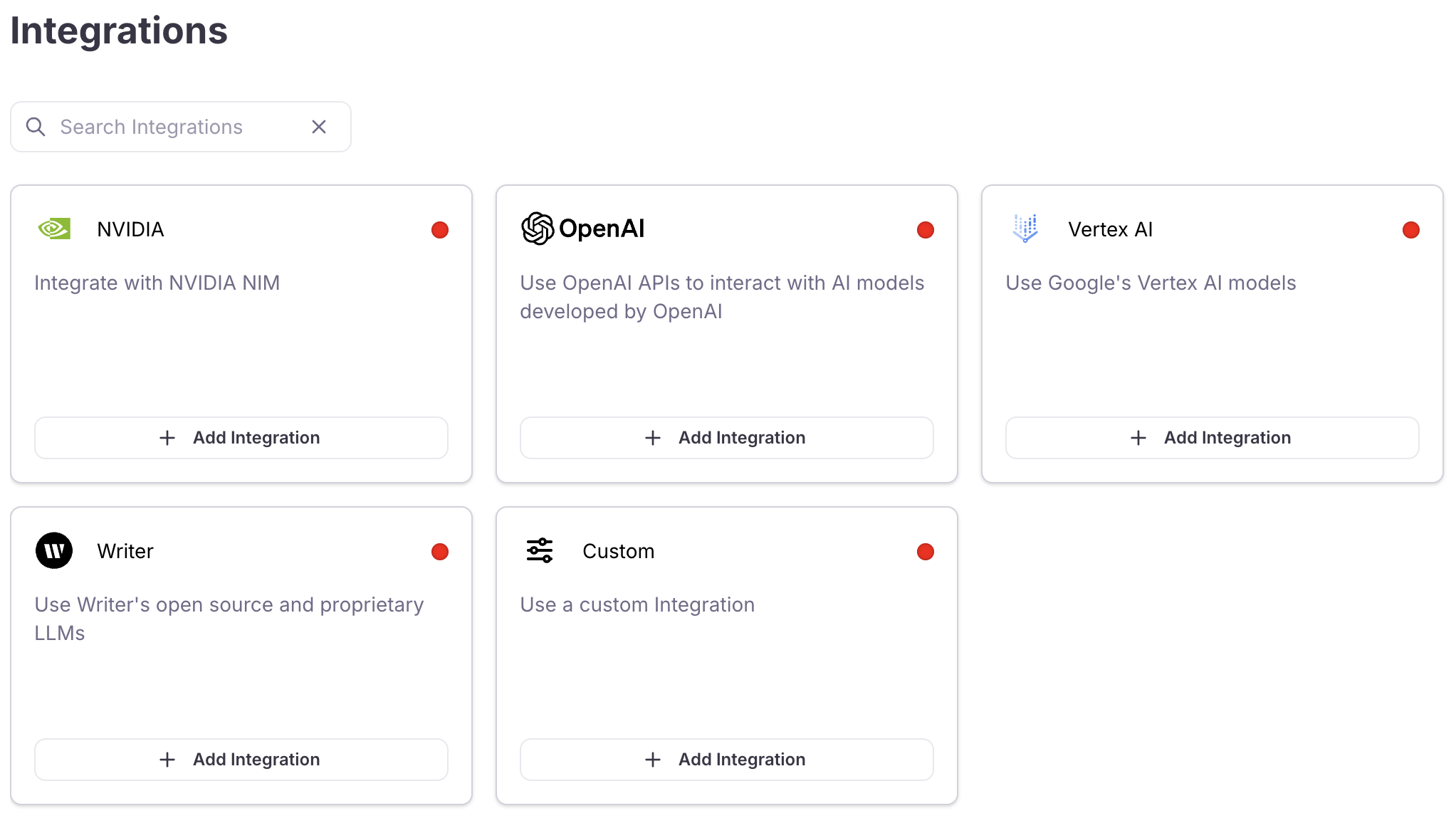

Prerequisite: Configure an LLM integration

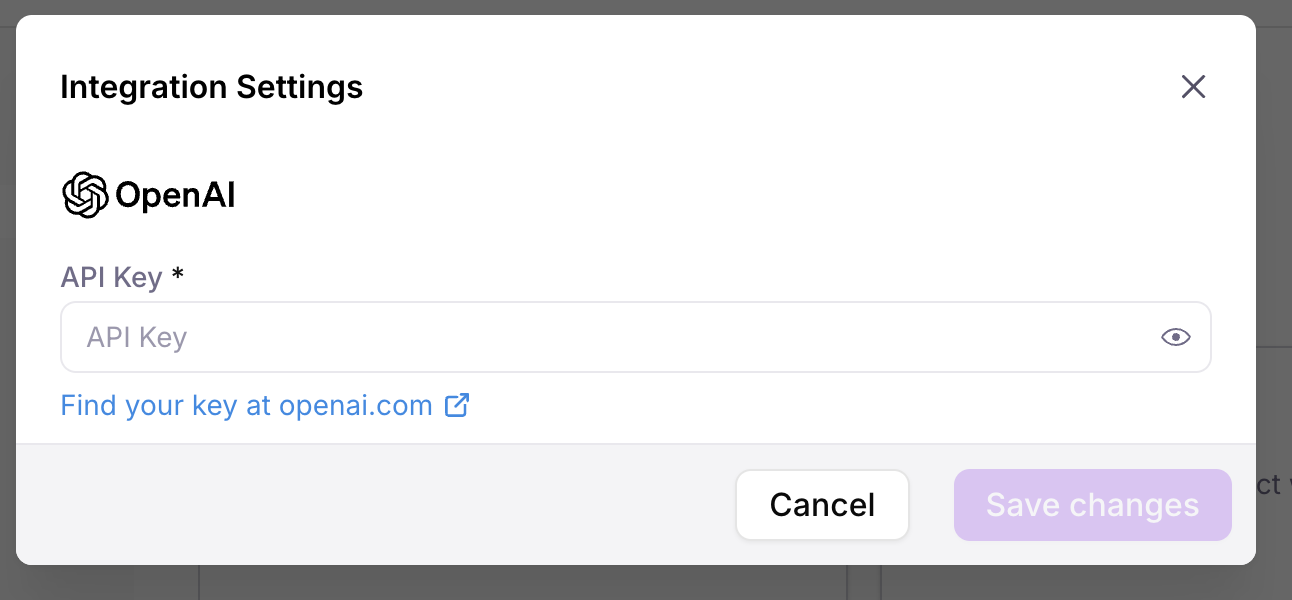

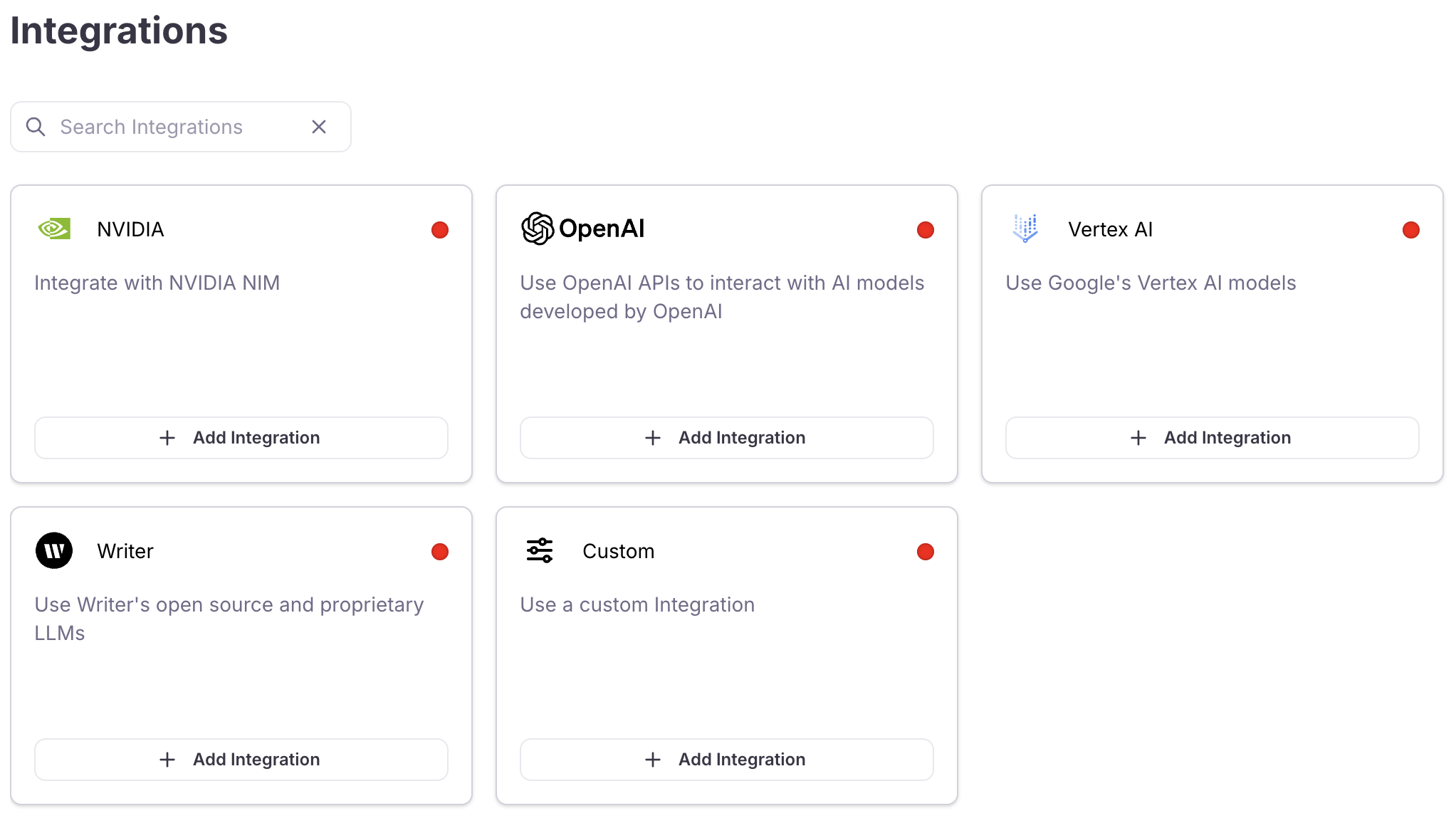

To run an experiment using a prompt and a dataset, you need to set up an LLM integration. An integration is also required to evaluate LLM outputs with metrics.

Add an integration

Locate the LLM provider you are using (or specify a custom integration), then select the +Add Integration button.

Example experiment with code

Below is a step-by-step guide. Jump to the application code.

Install dependencies

Install the Galileo SDK and the dotenv package using the following command in your terminal:

Set up your environment variables
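Before running the application code, it can help to confirm that the values it depends on are actually present in the environment. The following is a minimal sketch using only the Python standard library. The variable names `GALILEO_API_KEY` and `GALILEO_PROJECT` are assumptions inferred from the API key and project name mentioned in the troubleshooting notes, and `missing_env_vars` is a hypothetical helper, not part of the Galileo SDK.

```python
import os

# Assumed variable names; confirm them against your own Galileo setup.
REQUIRED_VARS = ("GALILEO_API_KEY", "GALILEO_PROJECT")


def missing_env_vars(env=None, required=REQUIRED_VARS):
    """Return the required variable names that are unset or blank."""
    env = os.environ if env is None else env
    return [name for name in required if not env.get(name, "").strip()]


if __name__ == "__main__":
    missing = missing_env_vars()
    if missing:
        print("Missing environment variables: " + ", ".join(missing))
    else:
        print("All required environment variables are set.")
```

If you keep these values in a `.env` file, load that file with the dotenv package before calling the check so that the values appear in `os.environ`.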

Create your application code

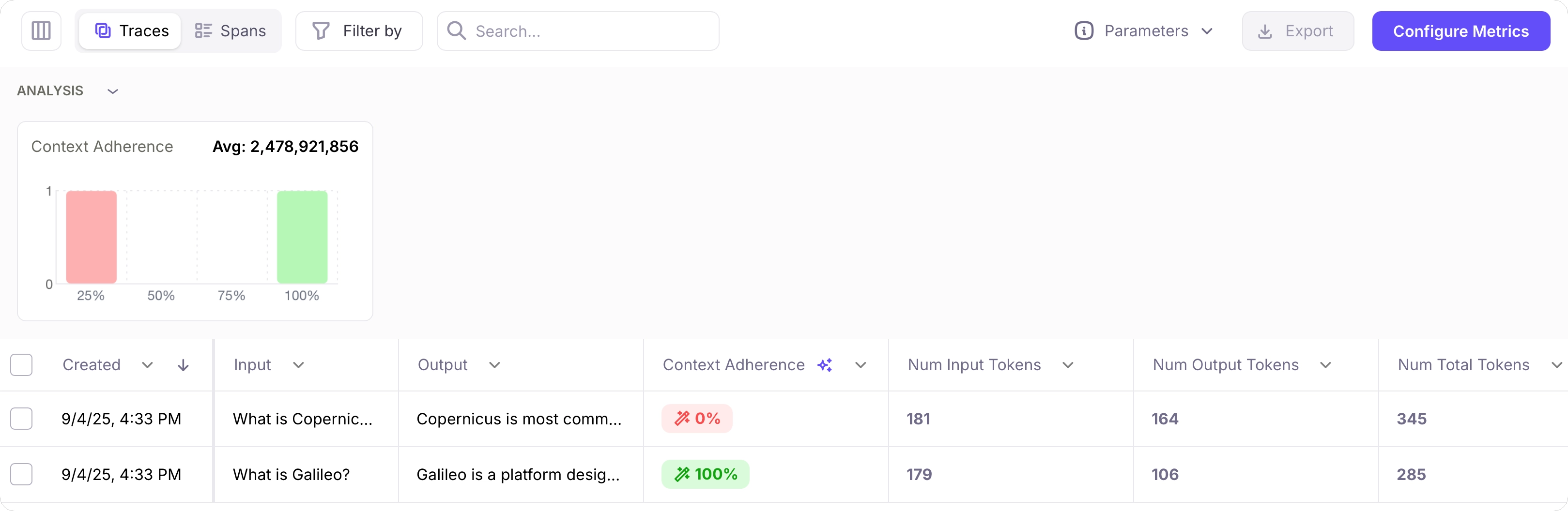

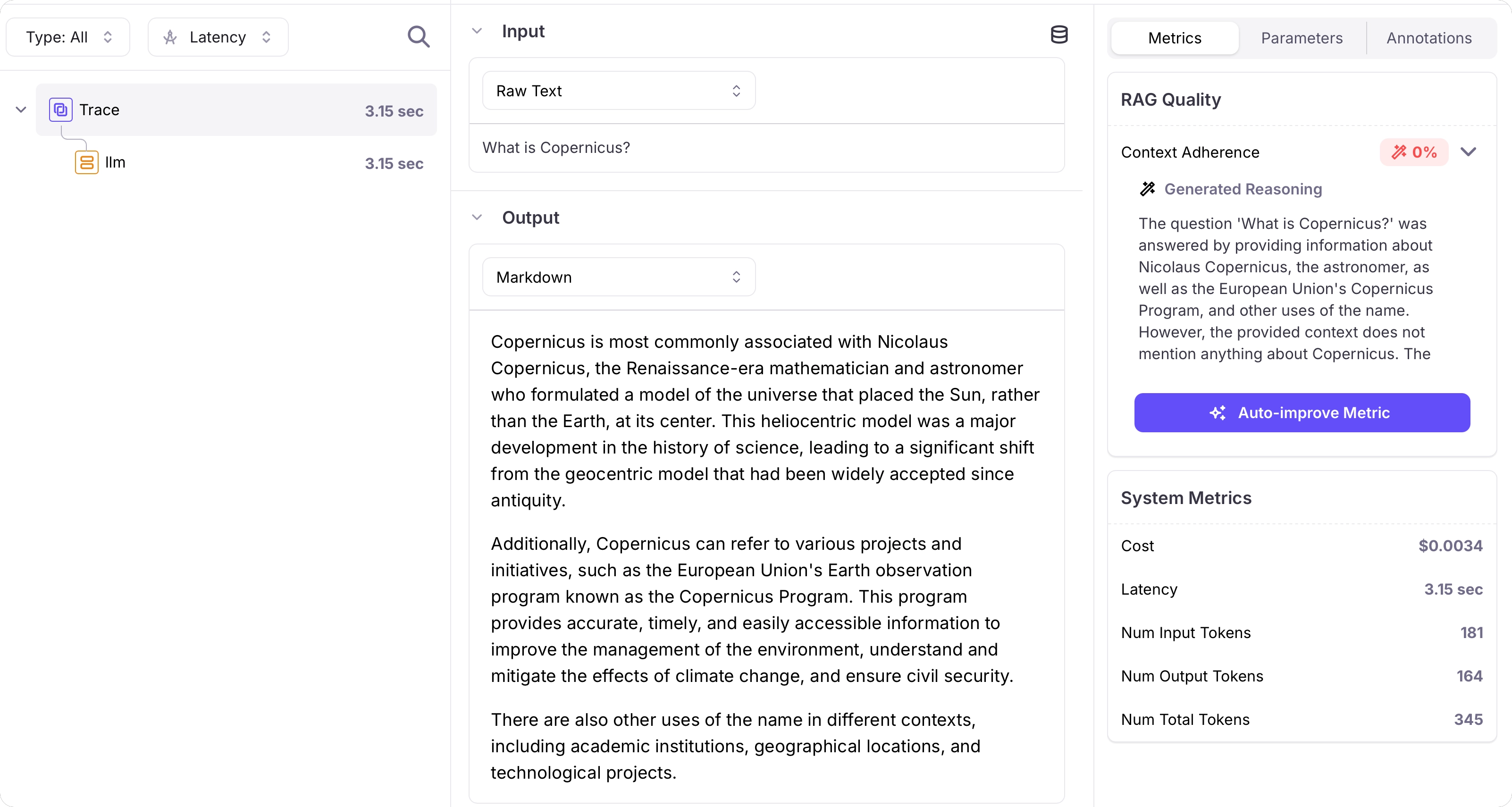
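The user prompt in this step's code uses a mustache template to inject rows from the dataset. As a rough illustration of that substitution only, the sketch below fills `{{name}}` placeholders from a dictionary. It is not the Galileo SDK and does not reproduce how Galileo renders prompts; `render_template`, the `input` field name, and the sample rows are all hypothetical.

```python
import re

# Matches simple mustache variables such as {{input}} or {{ input }}.
_VARIABLE = re.compile(r"\{\{\s*(\w+)\s*\}\}")


def render_template(template, row):
    """Replace each {{name}} placeholder with the matching field of a row."""

    def substitute(match):
        key = match.group(1)
        if key not in row:
            raise KeyError(f"dataset row has no field {key!r}")
        return str(row[key])

    return _VARIABLE.sub(substitute, template)


# Hypothetical user prompt and dataset rows, for illustration only.
user_prompt = "Answer the question: {{input}}"
dataset = [
    {"input": "Which continent is Spain in?"},
    {"input": "Which continent is Japan in?"},
]

# One rendered user prompt per dataset row.
rendered = [render_template(user_prompt, row) for row in dataset]
for prompt in rendered:
    print(prompt)
```

Raising an error for a missing field, rather than silently leaving the placeholder in place, makes a mismatch between the prompt and the dataset columns visible early.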

Create a file called app.py (Python) or app.ts (TypeScript) and add the following code:

This code creates a prompt containing a system prompt and a user prompt. The user prompt has a mustache template to inject rows from the dataset. The code also creates a dataset, then uses the prompt and dataset to run an experiment that measures context adherence. If the prompt or dataset already exists, it is loaded instead of being recreated.

This code defaults to using gpt-5-mini. If you want to use a different model, update the model_alias in the prompt settings passed to the call to run the experiment.

Troubleshooting

- I need a Galileo API key: Head to app.galileo.ai and sign up. Then head to the API keys page to get a new API key.

- What’s my project name?: The project name was set when you created a new project. If you haven’t created a project yet, head to Galileo and select the New Project button.

Next steps

Create a dataset

Learn how to create and manage datasets in Galileo.

Run experiments in playgrounds

Learn about running experiments in the Galileo console using playgrounds and datasets.

Run experiments with code

Learn how to run experiments in Galileo.

Compare experiments

Learn how to compare experiments in Galileo.