Configure Galileo for Out-of-the-Box and LLM-as-a-judge metrics

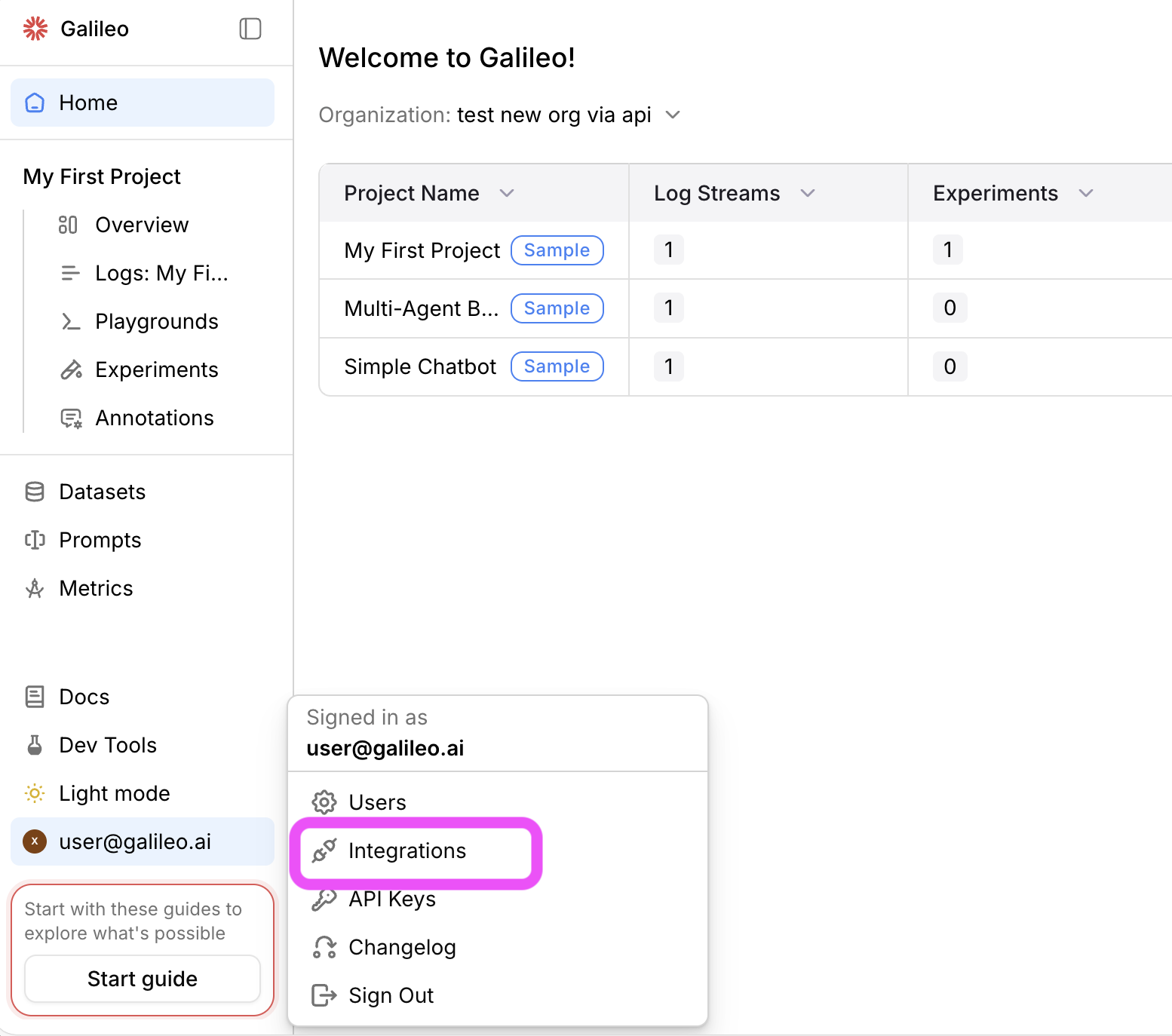

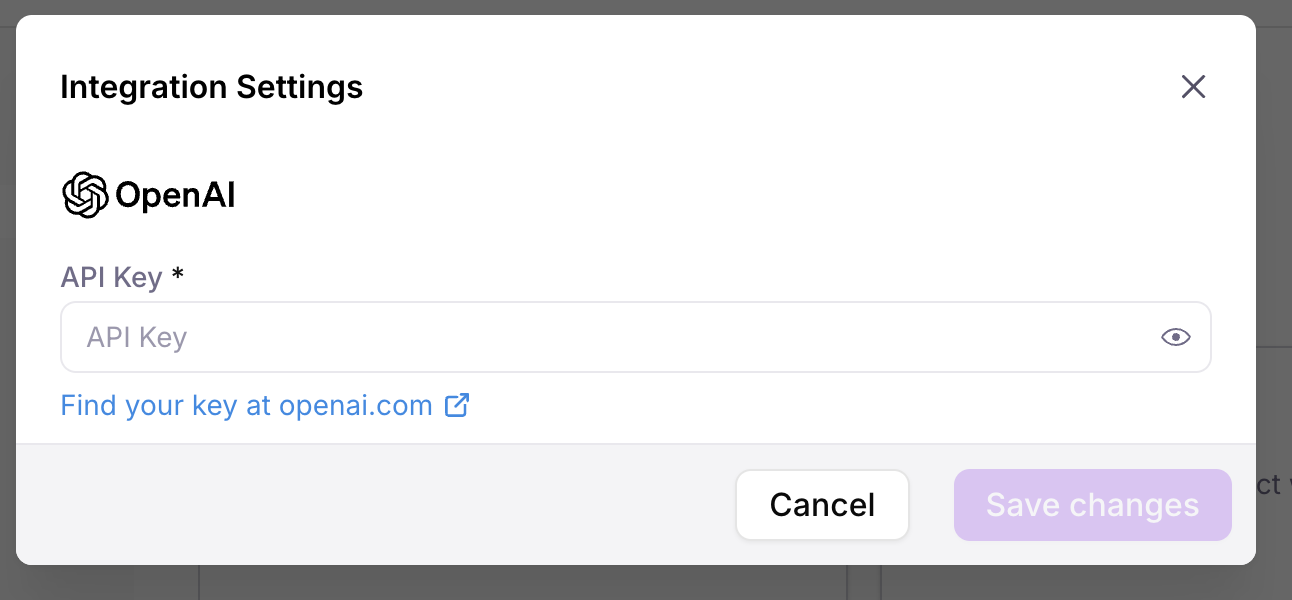

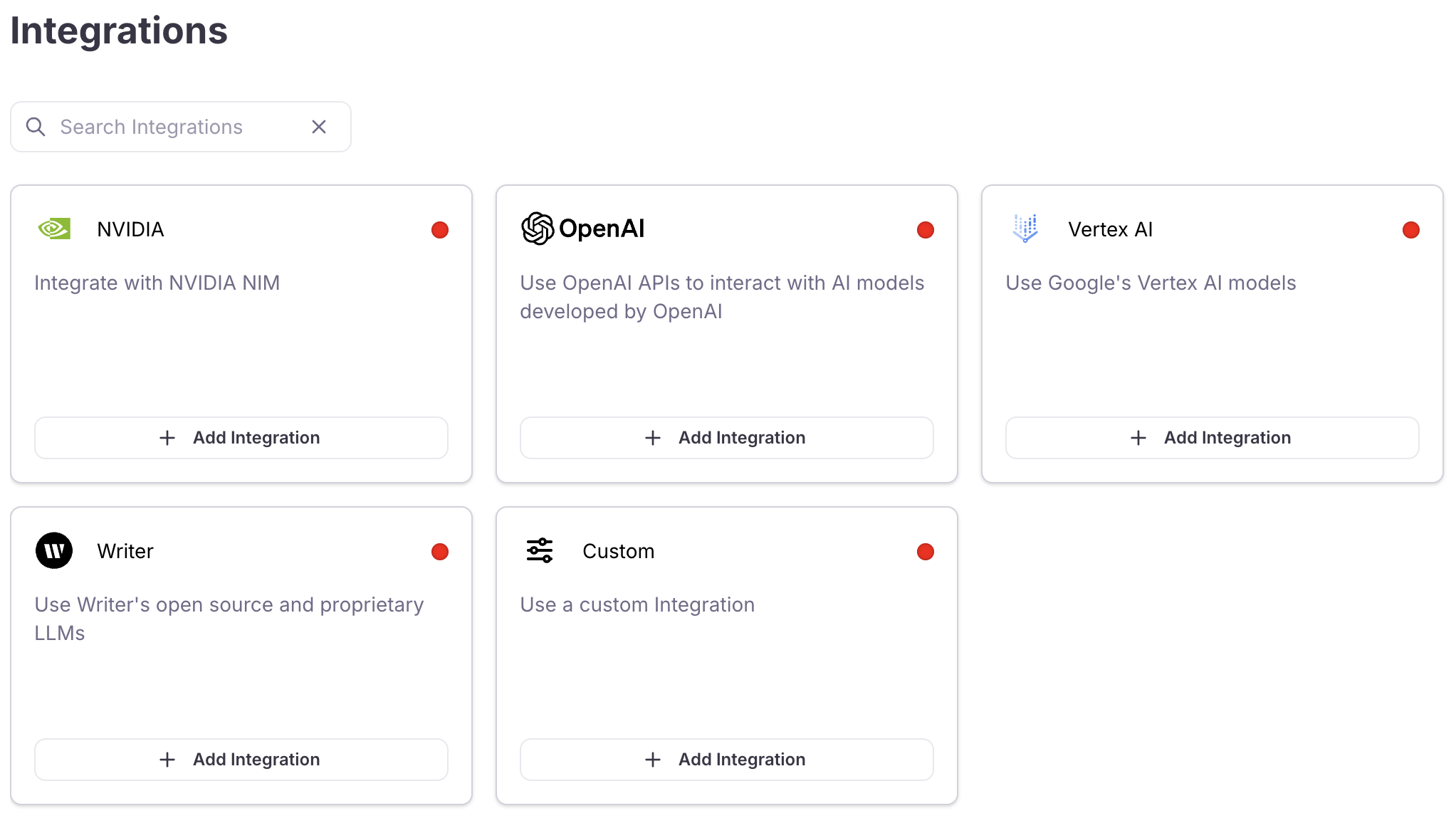

Most Out-of-the-Box metrics and all LLM-as-a-judge metrics are LLM-based: they use an LLM to evaluate inputs and outputs. To use these metrics in Log Streams or Experiments, you first need to configure an integration with an LLM platform.

Add an integration

Locate the LLM provider you are using (or specify a custom integration), then select the +Add Integration button.

Using metrics effectively

To get the most value from Galileo’s metrics:

- Start with key metrics - Focus on metrics most relevant to your use case

- Establish baselines - Understand your current performance before making changes

- Track trends over time - Monitor how metrics change as you iterate on your system

- Combine multiple metrics - Look at related metrics together for a more complete picture

- Set thresholds - Define acceptable ranges for critical metrics

- Improve the metrics - Use Continuous Learning via Human Feedback (CLHF) to continuously improve the metrics
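The baseline and threshold practices above can be sketched in a few lines of plain Python. This is a minimal illustration, not Galileo's API: the metric names, scores, and threshold values are hypothetical, and it assumes you have already exported per-run scores on a 0 to 1 scale where higher is better.

```python
from statistics import mean

# Hypothetical scores from earlier runs, keyed by metric name (0-1, higher is better).
baseline_runs = {
    "context_adherence": [0.82, 0.80, 0.84],
    "completeness": [0.70, 0.74, 0.72],
}
# Hypothetical scores from the run under review.
current_run = {"context_adherence": 0.76, "completeness": 0.75}

# Hypothetical minimum acceptable values for critical metrics.
thresholds = {"context_adherence": 0.80, "completeness": 0.70}


def review_metrics(baseline_runs, current_run, thresholds):
    """Compare each current score to its baseline mean and its threshold."""
    report = {}
    for name, score in current_run.items():
        baseline = mean(baseline_runs[name])
        report[name] = {
            "baseline": round(baseline, 3),
            "delta": round(score - baseline, 3),
            "meets_threshold": score >= thresholds[name],
        }
    return report


report = review_metrics(baseline_runs, current_run, thresholds)
for name, row in report.items():
    print(name, row)
```

Looking at the delta and the threshold check together shows whether a metric regressed relative to its own history, fell outside the acceptable range, or both.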

Out-of-the-Box metric categories

The Out-of-the-Box metrics fall into seven categories, each addressing a specific aspect of AI system performance. Depending on which aspects matter most to you, you will often benefit from using metrics from more than one category. Galileo also supports custom metrics, which you can implement alongside the Out-of-the-Box metrics. The Metrics Comparison provides a full list of the Out-of-the-Box metrics for each category.

1. Agentic performance metrics

Agentic performance metrics evaluate how effectively AI agents perform tasks, use tools, and progress toward goals.

| When to Use | Example Use Cases |
|---|---|
| When building and optimizing AI systems that take actions, make decisions, or use tools to accomplish tasks. | |

2. Expression and readability metrics

Expression and readability metrics assess the style, tone, clarity, and overall presentation of AI-generated content.

| When to Use | Example Use Cases |
|---|---|
| When the format, tone, and presentation of AI outputs are important for user experience or brand consistency. | |

3. Model confidence metrics

Model confidence metrics measure how certain or uncertain your AI model is about its responses.

| When to Use | Example Use Cases |
|---|---|
| When you want to flag low-confidence responses for review, improve system reliability, or better understand model uncertainty. | |
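As a rough sketch of flagging low-confidence responses for review, the snippet below filters responses by a score. The `uncertainty` field, its 0 to 1 scale, and the 0.5 cutoff are all assumptions made for illustration; they are not Galileo's schema or recommended values.

```python
# Hypothetical logged responses; the "uncertainty" field and its 0-1 scale
# are assumptions for illustration (higher means less confident).
responses = [
    {"id": "r1", "uncertainty": 0.12},
    {"id": "r2", "uncertainty": 0.67},
    {"id": "r3", "uncertainty": 0.41},
    {"id": "r4", "uncertainty": 0.90},
]


def flag_for_review(responses, max_uncertainty=0.5):
    """Return IDs of responses above the cutoff, most uncertain first."""
    flagged = [r for r in responses if r["uncertainty"] > max_uncertainty]
    flagged.sort(key=lambda r: r["uncertainty"], reverse=True)
    return [r["id"] for r in flagged]


review_queue = flag_for_review(responses)
print(review_queue)
```

Sorting the queue by score puts the least certain responses in front of reviewers first; the right cutoff depends on your own data and review capacity.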

4. Response quality metrics

Response quality metrics evaluate how correctly and consistently your AI follows instructions and answers user queries, and how closely its answers match ground truth, in any setting, with or without RAG. They include correctness, instruction adherence, and ground truth adherence.

5. RAG metrics

RAG metrics evaluate retrieval and generation quality in RAG pipelines, including retrieval quality (chunk relevance, context relevance, context precision, Precision @ K) and generation quality (chunk attribution utilization, context adherence, completeness).

| When to Use | Example Use Cases |
|---|---|
| When evaluating how well retrieval systems find relevant context and how well models use that context to produce accurate, complete, and well-grounded responses. | |
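To make one of the retrieval metrics concrete, here is a small worked example of Precision @ K using the standard information-retrieval definition: the fraction of the top K retrieved chunks that are relevant. The relevance labels are hypothetical, and Galileo's own computation (for example, how it judges relevance) may differ from this sketch.

```python
def precision_at_k(relevance, k):
    """Fraction of the top-k retrieved chunks that are relevant.

    `relevance` is a list of booleans in retrieval rank order.
    """
    if k <= 0:
        raise ValueError("k must be a positive integer")
    top_k = relevance[:k]
    # Divide by k, not len(top_k), so missing results count against the score.
    return sum(top_k) / k


# Hypothetical relevance labels for five retrieved chunks, best-ranked first.
relevance = [True, False, True, True, False]

print(precision_at_k(relevance, 3))  # 2 of the top 3 chunks are relevant
print(precision_at_k(relevance, 5))  # 3 of the top 5 chunks are relevant
```

Comparing the value at several cutoffs shows whether relevant chunks are concentrated near the top of the ranking or spread further down.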

6. Safety and compliance metrics

Safety and compliance metrics identify potential risks, harmful content, bias, or privacy concerns in AI interactions.

| When to Use | Example Use Cases |
|---|---|
| When ensuring AI systems meet regulatory requirements, protect user privacy, and avoid generating harmful or biased content. | |

7. Text-to-SQL metrics

Text-to-SQL metrics evaluate the accuracy and effectiveness of SQL queries generated by AI models from natural language inputs.

| When to Use | Example Use Cases |
|---|---|
| Use these metrics to evaluate the quality of SQL queries generated from natural language inputs. They’re essential when building Text-to-SQL systems that need to produce syntactically correct queries grounded in your database schema. | |

Next steps

Custom LLM-as-a-judge metrics

Learn how to create evaluation metrics using LLMs to judge the quality of responses

Custom code-based metrics

Learn how to create, register, and use custom metrics to evaluate your LLM applications

Customizing Your LLM-Powered Metrics via CLHF

Learn how to customize your LLM-powered metrics with Continuous Learning via Human Feedback