Out-of-the-box metrics reference

The tables below list the constants used in code to access each metric. To use these metrics, import the relevant enum.

LLM-as-a-judge metrics

| Metric | Enum Value |
|---|---|
| Action Advancement | GalileoMetrics.action_advancement |
| Action Completion | GalileoMetrics.action_completion |
| Agent Efficiency | GalileoMetrics.agent_efficiency |
| Agent Flow | GalileoMetrics.agent_flow |
| BLEU | GalileoMetrics.bleu |
| Chunk Attribution Utilization | GalileoMetrics.chunk_attribution_utilization |
| Completeness | GalileoMetrics.completeness |
| Context Adherence | GalileoMetrics.context_adherence |
| Context Precision | GalileoMetrics.context_precision |
| Context Relevance (Query Adherence) | GalileoMetrics.context_relevance |
| Conversation Quality | GalileoMetrics.conversation_quality |
| Correctness (factuality) | GalileoMetrics.correctness |
| Ground Truth Adherence | GalileoMetrics.ground_truth_adherence |
| Instruction Adherence | GalileoMetrics.instruction_adherence |
| PII (personally identifiable information) | GalileoMetrics.input_pii, GalileoMetrics.output_pii |
| Prompt Injection | GalileoMetrics.prompt_injection |
| Prompt Perplexity | GalileoMetrics.prompt_perplexity |
| ROUGE | GalileoMetrics.rouge |
| Sexism / Bias | GalileoMetrics.input_sexism, GalileoMetrics.output_sexism |
| Tone | GalileoMetrics.input_tone, GalileoMetrics.output_tone |
| Tool Errors | GalileoMetrics.tool_error_rate |
| Tool Selection Quality | GalileoMetrics.tool_selection_quality |
| Reasoning Coherence | GalileoMetrics.reasoning_coherence |
| SQL Correctness | GalileoMetrics.sql_correctness |
| SQL Adherence | GalileoMetrics.sql_adherence |
| SQL Injection | GalileoMetrics.sql_injection |
| SQL Efficiency | GalileoMetrics.sql_efficiency |
| Toxicity | GalileoMetrics.input_toxicity, GalileoMetrics.output_toxicity |
| User Intent Change | GalileoMetrics.user_intent_change |
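Several rows in the table (PII, Sexism / Bias, Tone, Toxicity) map to two enum values, one scoring the input and one scoring the output, so each side is requested separately. The sketch below illustrates this with a hypothetical expand helper. GalileoMetrics here is a local stand-in with only a few members and assumed string values, so the example runs without the Galileo SDK.

```python
from enum import Enum


# Hypothetical stand-in for the SDK's GalileoMetrics enum so this sketch runs
# offline. Only a few members are reproduced, and their string values are assumed.
class GalileoMetrics(str, Enum):
    context_adherence = "context_adherence"
    input_pii = "input_pii"
    output_pii = "output_pii"
    input_toxicity = "input_toxicity"
    output_toxicity = "output_toxicity"


# Table rows that map to separate input and output enum values.
PAIRED = {
    "pii": (GalileoMetrics.input_pii, GalileoMetrics.output_pii),
    "toxicity": (GalileoMetrics.input_toxicity, GalileoMetrics.output_toxicity),
}


def expand(names):
    """Hypothetical helper: look up each name as an enum member, expanding
    paired metrics into both their input and output variants."""
    metrics = []
    for name in names:
        if name in PAIRED:
            metrics.extend(PAIRED[name])
        else:
            # Enum lookup by member name; raises KeyError for unknown names.
            metrics.append(GalileoMetrics[name])
    return metrics


metrics = expand(["context_adherence", "pii"])
```

If you only need one side, for example output PII, reference that single member directly instead of expanding the pair.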

Luna-2 metrics

If you are using the Galileo Luna-2 model, use these metric values instead.

| Metric | Enum Value |
|---|---|
| Action Advancement | GalileoMetrics.action_advancement_luna |
| Action Completion | GalileoMetrics.action_completion_luna |
| Chunk Attribution Utilization | GalileoMetrics.chunk_attribution_utilization_luna |
| Completeness | GalileoMetrics.completeness_luna |
| Context Adherence | GalileoMetrics.context_adherence_luna |
| PII (personally identifiable information) | GalileoMetrics.input_pii, GalileoMetrics.output_pii |
| Prompt Injection | GalileoMetrics.prompt_injection_luna |
| Sexism / Bias | GalileoMetrics.input_sexism_luna, GalileoMetrics.output_sexism_luna |
| Tone | GalileoMetrics.input_tone, GalileoMetrics.output_tone |
| Tool Errors | GalileoMetrics.tool_error_rate_luna |
| Tool Selection Quality | GalileoMetrics.tool_selection_quality_luna |
| Toxicity | GalileoMetrics.input_toxicity_luna, GalileoMetrics.output_toxicity_luna |
| Uncertainty | GalileoMetrics.uncertainty |
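Apart from PII, Tone, and the Luna-only Uncertainty metric, each Luna-2 value in this table is the standard value with a _luna suffix. As a sketch, the hypothetical to_luna helper below converts standard metric names (plain strings, so it runs without the SDK) into their Luna-2 equivalents. It uses only the entries in the Luna-2 table and rejects names that have no row there.

```python
# Standard metric names (from the first table) whose Luna-2 value adds "_luna".
HAS_LUNA_SUFFIX = {
    "action_advancement",
    "action_completion",
    "chunk_attribution_utilization",
    "completeness",
    "context_adherence",
    "prompt_injection",
    "input_sexism",
    "output_sexism",
    "tool_error_rate",
    "tool_selection_quality",
    "input_toxicity",
    "output_toxicity",
}

# Luna-2 table entries that keep the standard value, plus Uncertainty,
# which appears only in the Luna-2 table.
UNCHANGED = {"input_pii", "output_pii", "input_tone", "output_tone", "uncertainty"}


def to_luna(names):
    """Hypothetical helper: map metric names to their Luna-2 values.

    Raises ValueError for metrics that have no row in the Luna-2 table.
    """
    result = []
    for name in names:
        if name in HAS_LUNA_SUFFIX:
            result.append(name + "_luna")
        elif name in UNCHANGED:
            result.append(name)
        else:
            raise ValueError(f"No Luna-2 value listed for metric: {name}")
    return result


print(to_luna(["context_adherence", "input_pii", "output_toxicity"]))
# ['context_adherence_luna', 'input_pii', 'output_toxicity_luna']
```

Raising on unlisted names (for example bleu) is a design choice of this sketch: it surfaces metrics the Luna-2 table does not cover instead of silently passing them through.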

How do I use metrics in experiments?

The run experiment function (Python, TypeScript) takes a list of metrics as one of its arguments.

Preset metrics

Pass a list of one or more metric names to the run_experiment function, as shown below:

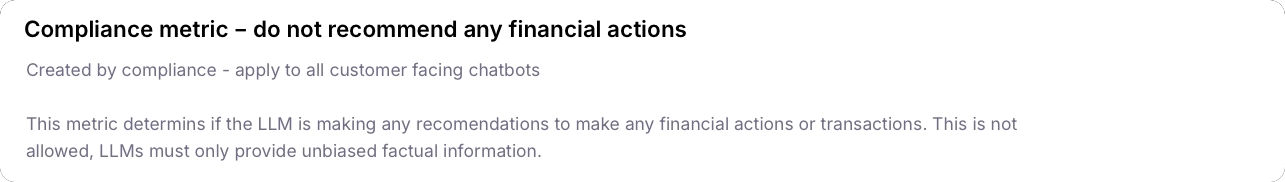
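A minimal sketch of building the metrics argument in Python follows. GalileoMetrics is a local stand-in with assumed values so the example runs offline. The run_experiment call is left as a comment because its import path and the parameter names other than metrics are assumptions here; check the SDK reference for the exact signature.

```python
from enum import Enum


# Hypothetical stand-in for the SDK's GalileoMetrics enum; values are assumed.
class GalileoMetrics(str, Enum):
    correctness = "correctness"
    context_adherence = "context_adherence"
    instruction_adherence = "instruction_adherence"


# One or more preset metrics, each referenced through the enum.
metrics = [
    GalileoMetrics.correctness,
    GalileoMetrics.context_adherence,
    GalileoMetrics.instruction_adherence,
]

# With the real SDK, this list is passed as the metrics argument.
# The import path and the other parameter names below are assumptions:
#
# from galileo.experiments import run_experiment
#
# results = run_experiment(
#     "my-experiment",
#     dataset=my_dataset,
#     function=my_app,
#     metrics=metrics,
#     project="my-project",
# )
```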

Custom metrics

You can use custom metrics in the same way as Galileo’s preset metrics. At a high level, this involves the following steps:

- Create your metric in the Galileo Console (or in code). Your custom metric returns a numerical score based on its input.
- Pass the name of your new metric to the run experiment function. For example, a custom metric named "Compliance - do not recommend any financial actions" is included in the metrics list by that name.
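Since custom metrics are referenced by name, a metrics list can mix preset enum members with custom metric names. This sketch assumes the SDK accepts a plain string for a custom metric's name. GalileoMetrics is a local stand-in with an assumed value, and the run_experiment call is left as a comment because its full signature is not given here.

```python
from enum import Enum


# Hypothetical stand-in for the SDK's GalileoMetrics enum; value is assumed.
class GalileoMetrics(str, Enum):
    correctness = "correctness"


# Name of a custom metric created in the Galileo Console. It should match
# the name the metric was saved under.
CUSTOM_METRIC = "Compliance - do not recommend any financial actions"

# Preset metrics (enum members) and custom metrics (names) in one list.
metrics = [GalileoMetrics.correctness, CUSTOM_METRIC]

# Assumed call shape; check the SDK reference for the exact signature:
# results = run_experiment("my-experiment", dataset=my_dataset,
#                          function=my_app, metrics=metrics)
```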

Ground truth data

Ground truth is the authoritative, validated answer or label used to benchmark model performance. For LLM metrics, this often means a gold-standard answer, fact, or supporting evidence against which outputs are compared. The following metrics require ground truth data to compute their scores, because they compare outputs directly to a reference answer, label, or fact. These metrics are supported only in experiments, because the ground truth must be set in the dataset the experiment uses.
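Because ground-truth metrics read the reference answer from the experiment's dataset, it can help to confirm that every row has one before requesting them. The sketch below is hypothetical: it represents the dataset as a list of dicts and assumes the reference answer is stored under a key named ground_truth. Adjust the key to match how your dataset actually stores it.

```python
def rows_missing_ground_truth(rows, key="ground_truth"):
    """Return the indexes of dataset rows with no ground truth value.

    The dict-per-row layout and the default key name are assumptions.
    """
    missing = []
    for index, row in enumerate(rows):
        value = row.get(key)
        # Treat a missing key, None, or a blank string as "no ground truth".
        if value is None or (isinstance(value, str) and not value.strip()):
            missing.append(index)
    return missing


dataset = [
    {"input": "What is the capital of France?", "ground_truth": "Paris"},
    {"input": "What is 2 + 2?", "ground_truth": ""},
    {"input": "Who wrote Hamlet?"},
]

print(rows_missing_ground_truth(dataset))
# [1, 2]
```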

Are metrics LLM-agnostic?

Yes, all metrics are designed to work with any LLM integrated with Galileo.

Next steps

Metrics Overview

Explore Galileo’s comprehensive metrics framework for evaluating and improving AI system performance across multiple dimensions.

Experiments Overview

Learn how to use datasets and experiments to improve your application.

Run experiments

Learn how to run experiments in Galileo using the Galileo SDKs and custom metrics.