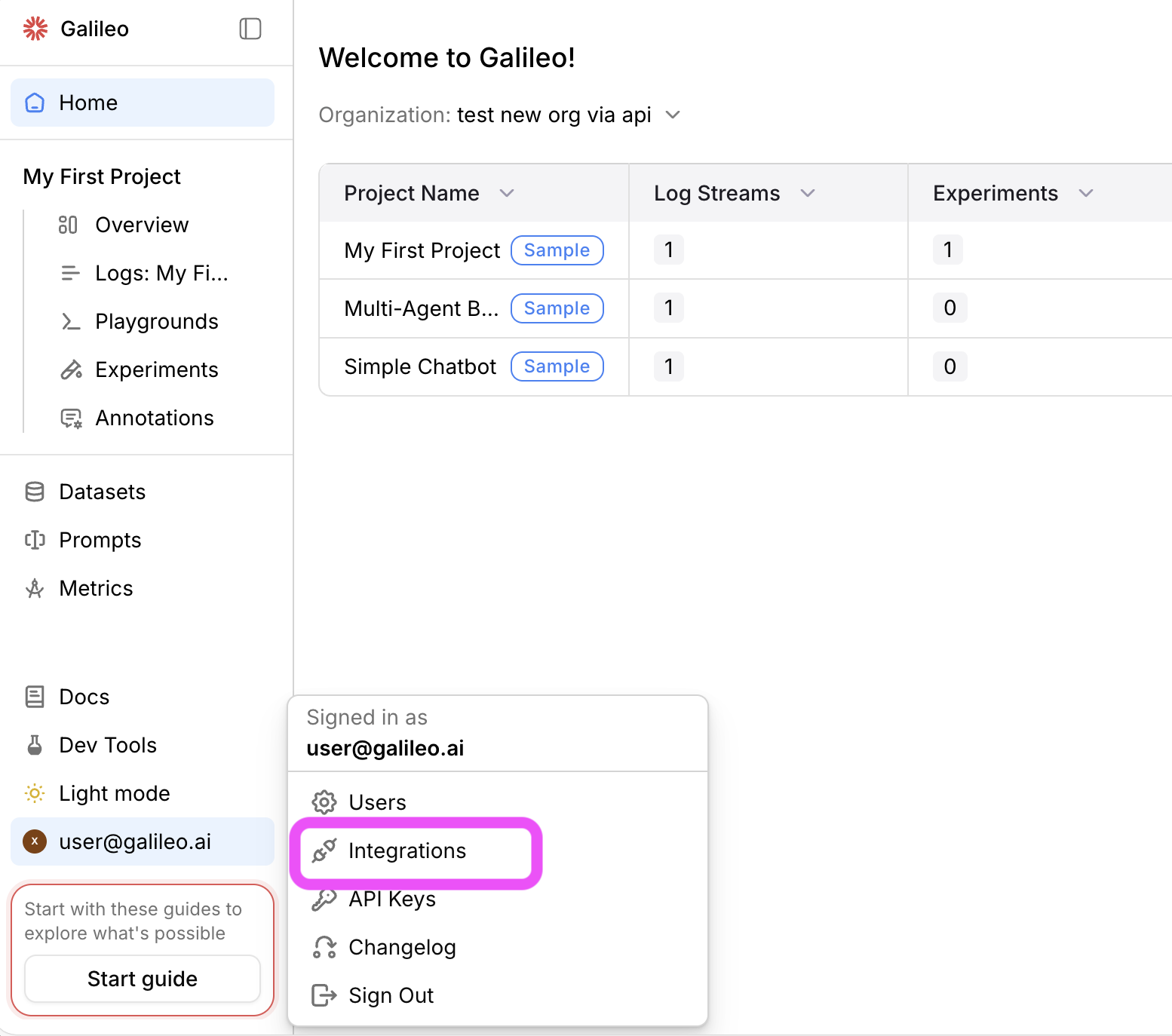

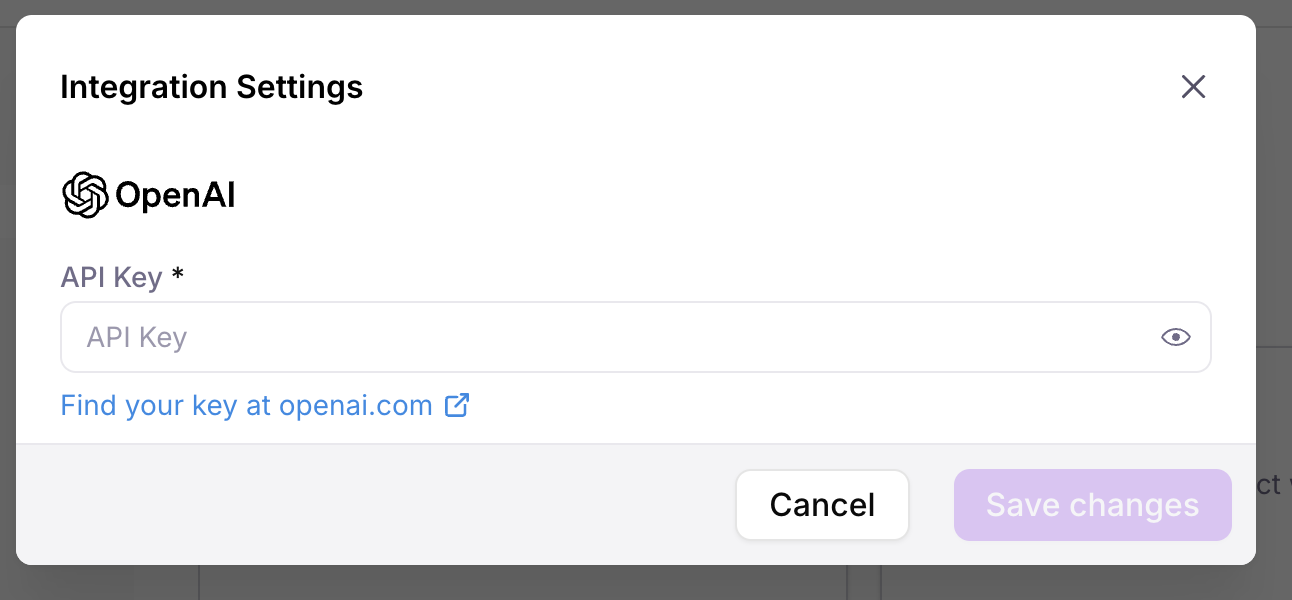

Configure an LLM integration

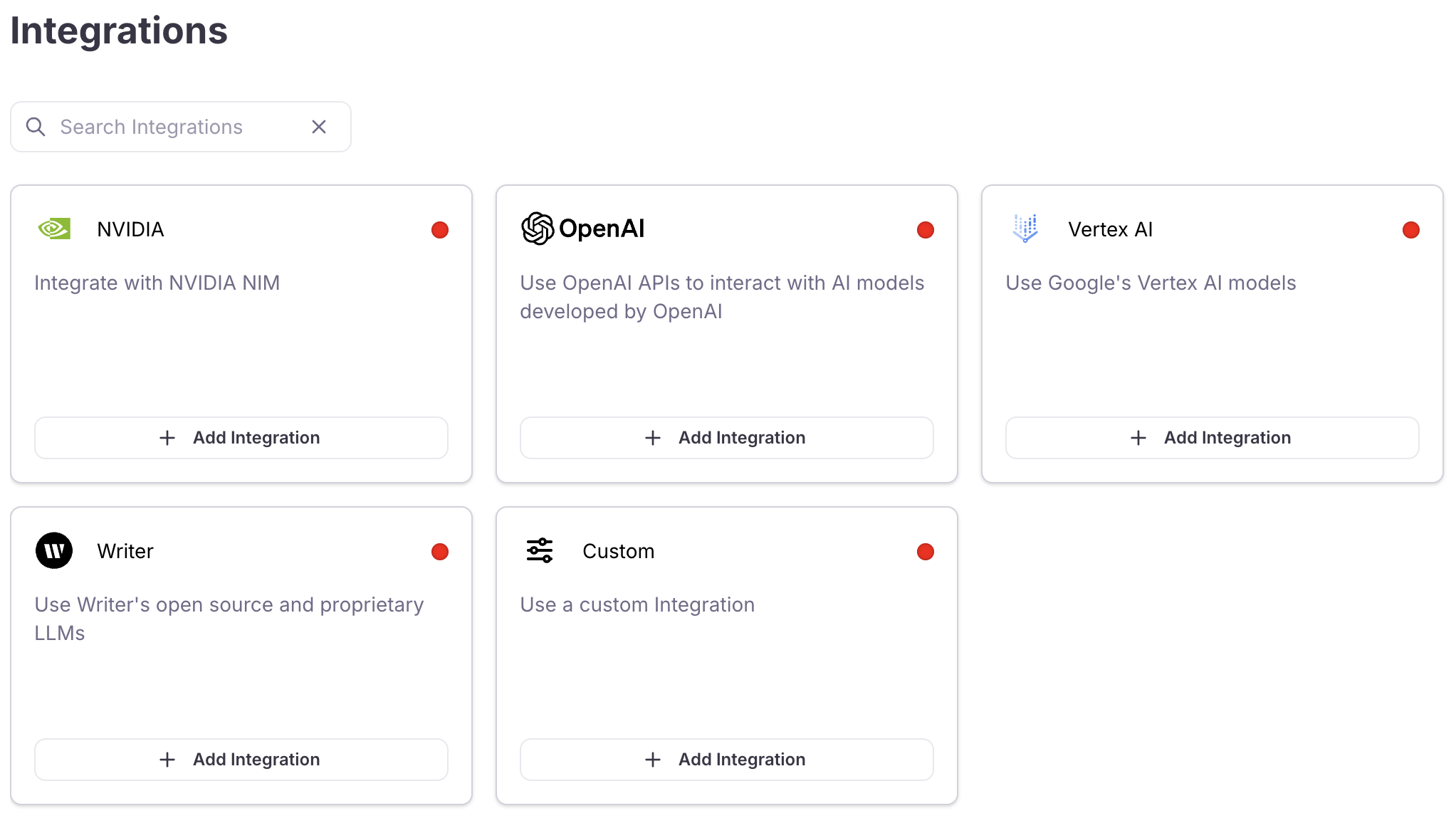

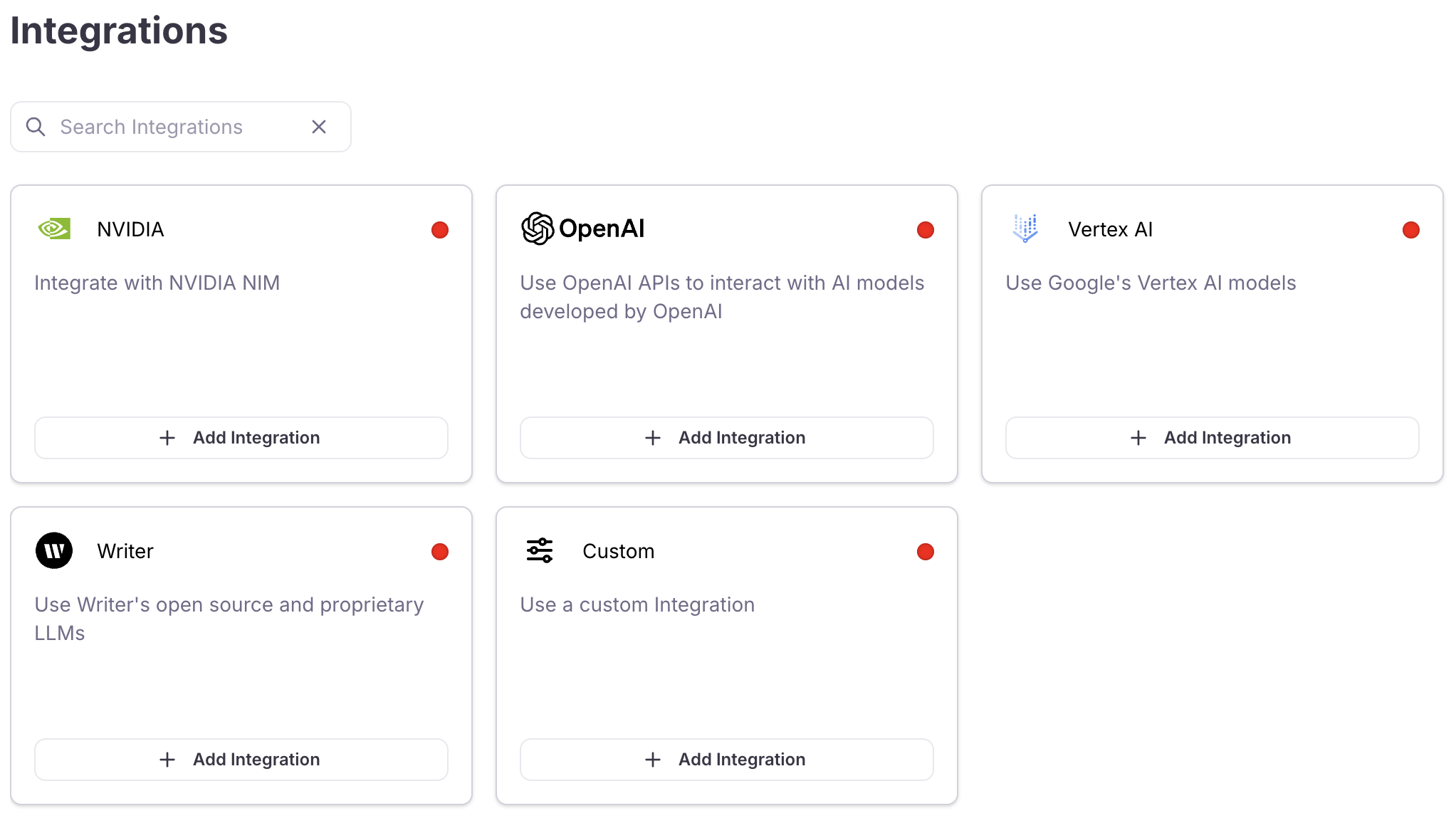

To evaluate metrics, you need to set up an LLM integration for the LLM that will be used as a judge.

Add an integration

Locate the LLM provider you are using (or specify a custom integration), then select the +Add Integration button.
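An LLM-as-judge metric works by sending the judge model a prompt that contains the context and the response being graded, then interpreting the judge's verdict. The sketch below is a hypothetical illustration of that idea. The template wording, `build_judge_prompt`, and `parse_verdict` are assumptions, not Galileo's actual judge prompt or SDK functions, and the call to the judge LLM itself is omitted.

```python
# Hypothetical sketch of an LLM-as-judge prompt for context adherence.
# The template and the Yes/No verdict format are illustrative assumptions,
# not Galileo's real judge prompt.

JUDGE_TEMPLATE = """You are grading whether a response is supported by the given context.

Context:
{context}

Response:
{response}

Answer with a single word: Yes if every claim in the response is supported by the context, otherwise No."""


def build_judge_prompt(context: str, response: str) -> str:
    """Fill the judge template with the context and the response being graded."""
    return JUDGE_TEMPLATE.format(context=context, response=response)


def parse_verdict(judge_output: str) -> bool:
    """Interpret the judge LLM's reply: True means the response adheres to the context."""
    return judge_output.strip().lower().startswith("yes")


if __name__ == "__main__":
    prompt = build_judge_prompt(
        context="The Eiffel Tower is in Paris.",
        response="The Eiffel Tower is in Paris.",
    )
    print(prompt)
    print(parse_verdict("Yes"))
```

In a real setup, the integration you add here supplies the judge model that would receive a prompt like this one.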

Log a trace with an evaluated metric

Enable the context adherence metric on your Log stream

To evaluate the Log stream against context adherence, you need to turn this metric on for your Log stream.

Add the following import statements to the top of your app file:

Next, add the following code to your app file. If you are using Python, add this after the call to galileo_context.init(). If you are using TypeScript, add this as the first line in the async block.

This code enables the context adherence metric for your Log stream. The metric will then be calculated for every LLM span that is logged.

Run your application

Now that you have metrics turned on for your Log stream, re-run your application to generate another trace. This time the context adherence metric will be calculated.
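Because the metric is calculated for every LLM span that is logged, a trace containing several spans gets one score per LLM span, while other span types are skipped. The following is a hypothetical local sketch of that behavior. `Span`, `score_trace`, and `lexical_adherence` are illustrative names, not Galileo SDK APIs, and `lexical_adherence` is a crude word-overlap stand-in; Galileo's metric uses the LLM judge configured above.

```python
# Hypothetical sketch: apply a context adherence scorer to every LLM span in a trace.
# Span, score_trace, and lexical_adherence are illustrative, not Galileo SDK APIs.
# lexical_adherence is a crude word-overlap proxy, not the real LLM-judged metric.
import re
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Span:
    name: str
    span_type: str  # e.g. "llm", "tool", "retriever"
    context: str = ""  # text the LLM was given (system prompt, retrieved documents)
    output: str = ""  # text the LLM produced


def _words(text: str) -> set:
    """Lowercased word set used for the overlap comparison."""
    return set(re.findall(r"[a-z0-9']+", text.lower()))


def lexical_adherence(context: str, output: str) -> float:
    """Fraction of output words that also appear in the context (0.0 to 1.0)."""
    out_words = _words(output)
    if not out_words:
        return 1.0  # an empty response makes no unsupported claims
    return len(out_words & _words(context)) / len(out_words)


def score_trace(
    spans: List[Span],
    scorer: Callable[[str, str], float] = lexical_adherence,
) -> Dict[str, float]:
    """Score only the LLM spans, mirroring a metric that runs on every logged LLM span."""
    return {s.name: scorer(s.context, s.output) for s in spans if s.span_type == "llm"}


if __name__ == "__main__":
    trace = [
        Span("answer", "llm", context="", output="Paris is the capital of France"),
        Span("search", "tool", output="irrelevant"),
    ]
    print(score_trace(trace))
```

An LLM span with no context scores 0.0 under this proxy, which mirrors why an ungrounded response tends to score low on context adherence.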

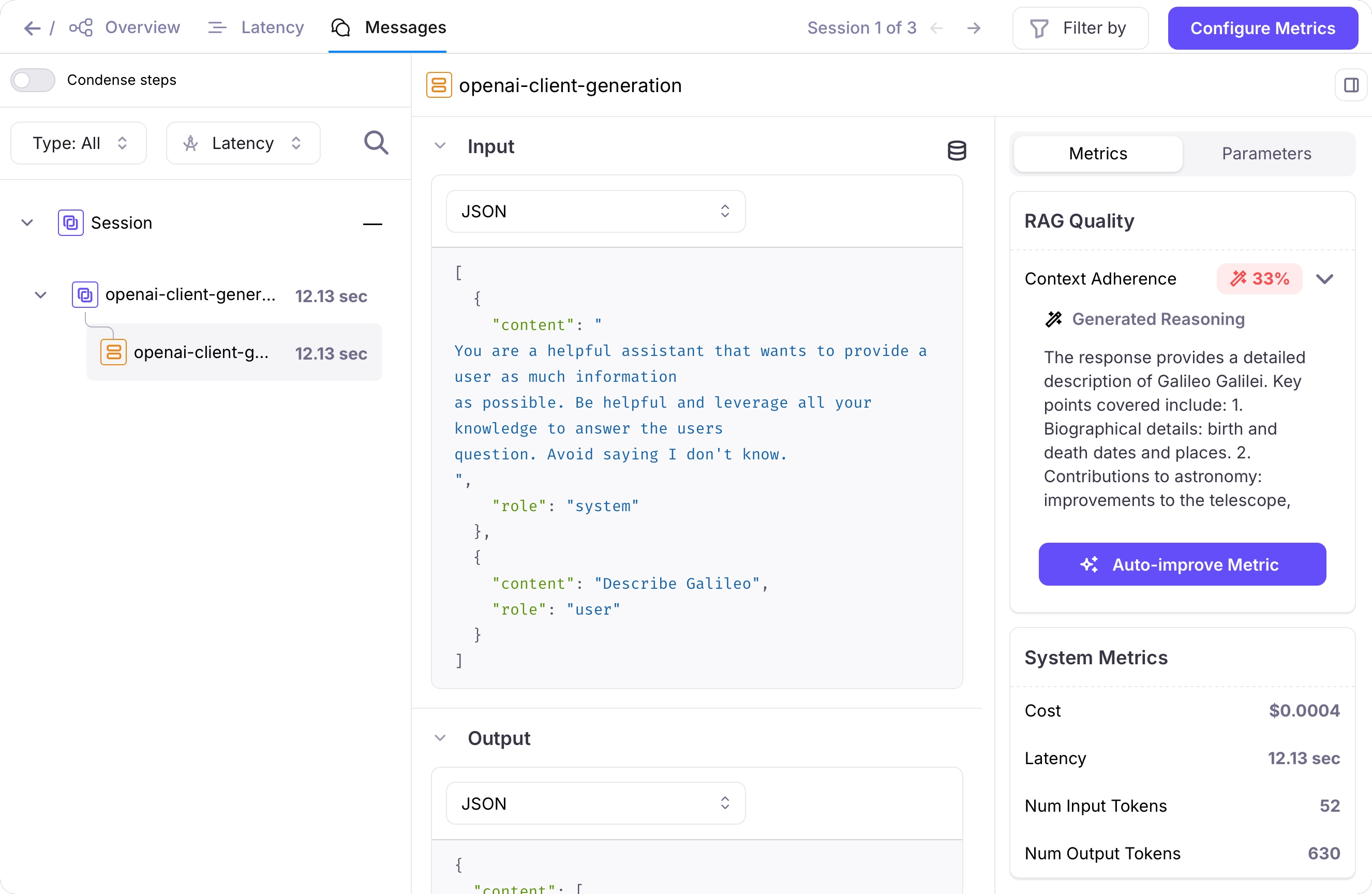

Open the Log stream in the Galileo console

In the Galileo console, select your project, then select the Log stream.

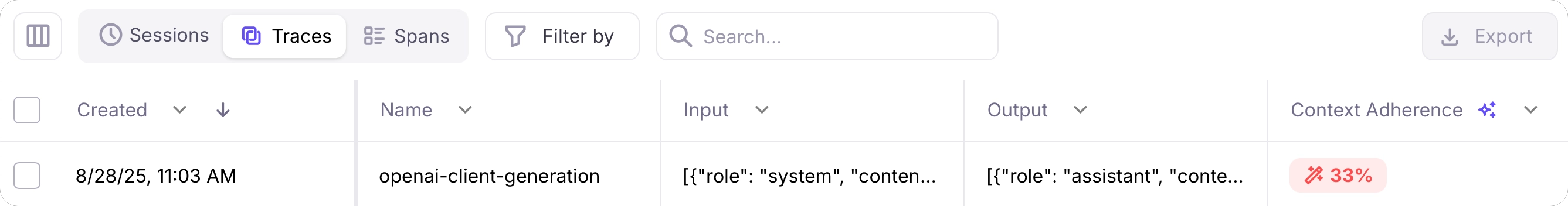

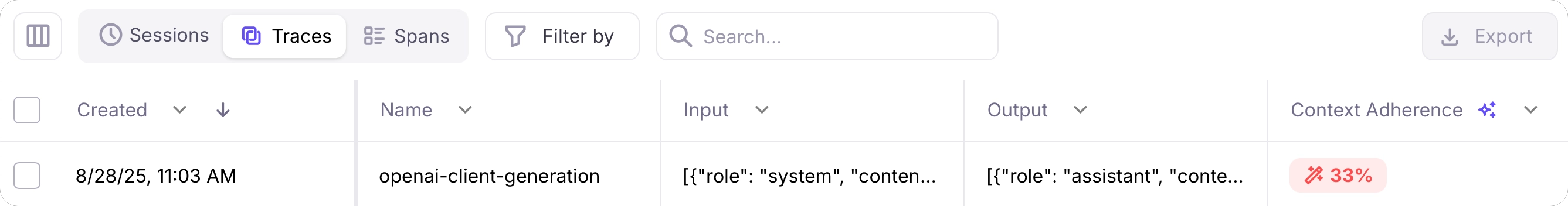

Select the Traces tab

The trace you just logged appears in the Traces tab. The context adherence metric is calculated for it and shows a low score, because the LLM has not yet been given any relevant context.

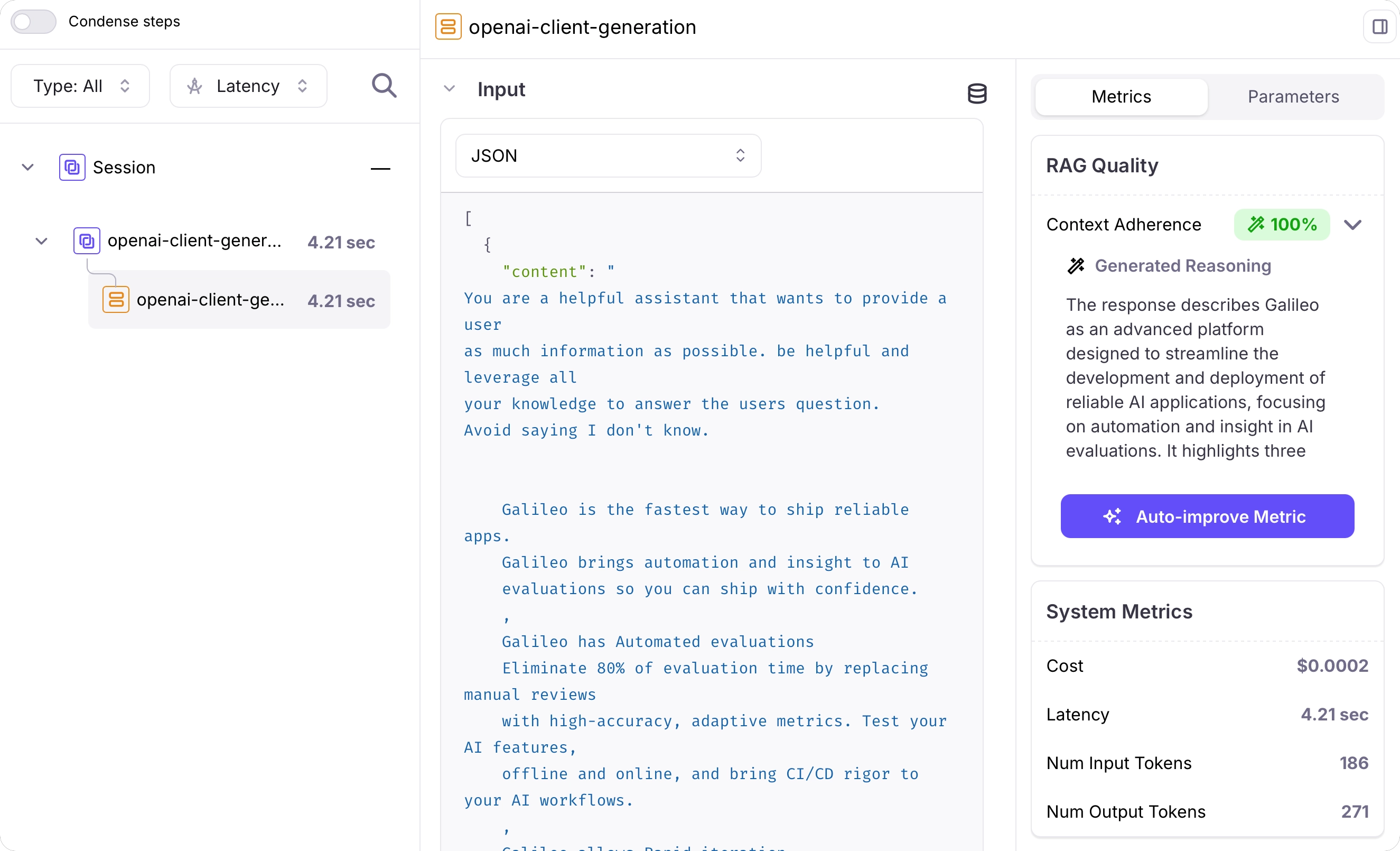

Improve your application

To improve the context adherence score, you can provide relevant context to the LLM in the system prompt.

Add relevant context to your system prompt

Adding relevant context to the system prompt is similar to supplying extra information retrieved by a RAG system.

Update your code, replacing the code that sets the system prompt with the following:
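As a hypothetical sketch of the general pattern, the function below places supporting documents in the system prompt, much as a RAG pipeline would with retrieved text. It uses the common role/content chat-message convention; `build_messages` and the prompt wording are illustrative assumptions, not the code from this guide.

```python
# Hypothetical sketch: inject relevant context into the system prompt, similar to
# what a RAG pipeline does with retrieved documents. The role/content message
# format follows the common chat-completions convention; names and wording are
# illustrative assumptions.
from typing import Dict, List


def build_messages(question: str, context_docs: List[str]) -> List[Dict[str, str]]:
    """Return chat messages whose system prompt carries the supporting context."""
    context_block = "\n".join(f"- {doc}" for doc in context_docs)
    system_prompt = (
        "You are a helpful assistant. Answer using only the context below. "
        "If the context does not contain the answer, say you don't know.\n\n"
        f"Context:\n{context_block}"
    )
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": question},
    ]


if __name__ == "__main__":
    messages = build_messages(
        "What is the capital of France?",
        ["Paris is the capital of France."],
    )
    print(messages[0]["content"])
```

You would then pass `messages` to whichever LLM client your app already uses. Telling the model to answer only from the supplied context is one common way to encourage responses that stay grounded in it.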

Next steps

Sample projects

Learn how to get started with the Galileo sample projects that are included in every new account.

Integrate with third-party frameworks

Learn about the Galileo integrations with third-party SDKs to automatically log your applications.

Cookbooks

Learn how to perform common tasks with Galileo, work with third-party integrations, and use evaluations to solve AI problems.

SDK reference

Python SDK Reference

The Galileo Python SDK reference.

TypeScript SDK Reference

The Galileo TypeScript SDK reference.